If you are responsible for making real decisions in a manufacturing plant, whether that means approving capital, supporting production, troubleshooting failures, planning upgrades, or figuring out how to connect older equipment into a modern SCADA or MES environment, then understanding the Rockwell ecosystem is not optional. It matters because in a very large number of North American facilities, Rockwell is not simply one vendor among many. Rockwell hardware, Rockwell software, and Rockwell architecture choices often define what is practical from a maintenance standpoint, what is possible from an engineering standpoint, what your team can realistically support, and how far your site can go with modernization, standardization, and plant data initiatives. The real question is not which PLC family exists inside the catalog. The real question is what each one means for your facility in practice.

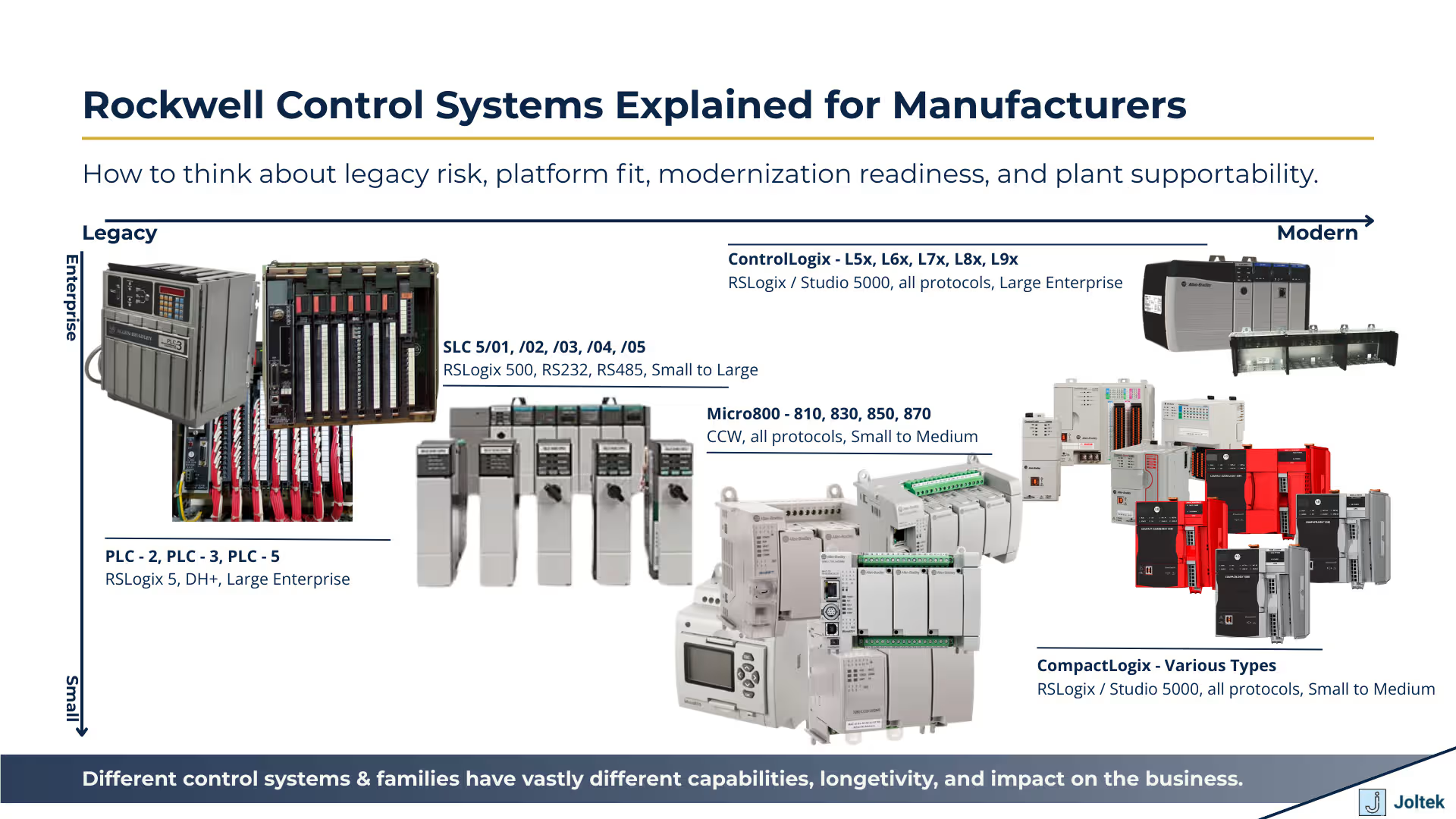

That is the lens we want to use for this article. We are not interested in giving you a surface level list of controller families and leaving it there. What matters much more is understanding how those platforms show up in the real world, especially when you are dealing with aging lines, mixed generations of equipment, new OEM machines entering the site, pressure to improve uptime, or leadership asking whether it is finally time to deal with obsolescence. The differences between PLC 5, SLC 500, MicroLogix, Micro800, CompactLogix, and ControlLogix are not just technical details for controls engineers to debate. Those differences affect spare parts strategy, software environments, networking decisions, long term supportability, the cost and complexity of migrations, and the ability of your plant to build something reliable and supportable over time.

Controls engineers need to understand the nuances, and maintenance teams need to know what they are inheriting when a machine arrives on the floor. But plant leadership also needs to understand the business implications of controller choices. Engineering managers need to understand where standardization creates real value and where inconsistency quietly adds cost. Teams working on SCADA, MES, historians, reporting, or broader industrial data projects need to understand that the quality of the control layer has a direct impact on everything that sits above it. In many plants, the conversation starts with dashboards, data collection, or modernization roadmaps, but the real constraint is still the underlying control system architecture.

That is why we wanted to put this article together. If you are trying to support legacy equipment, specify new systems, reduce exposure to obsolete hardware, or simply make better decisions about where your plant should go next, then you need a practical understanding of the Rockwell ecosystem. Not just what the families are called, but where they fit, where they create risk, where they still make sense, and where they become barriers to reliability, integration, and growth.

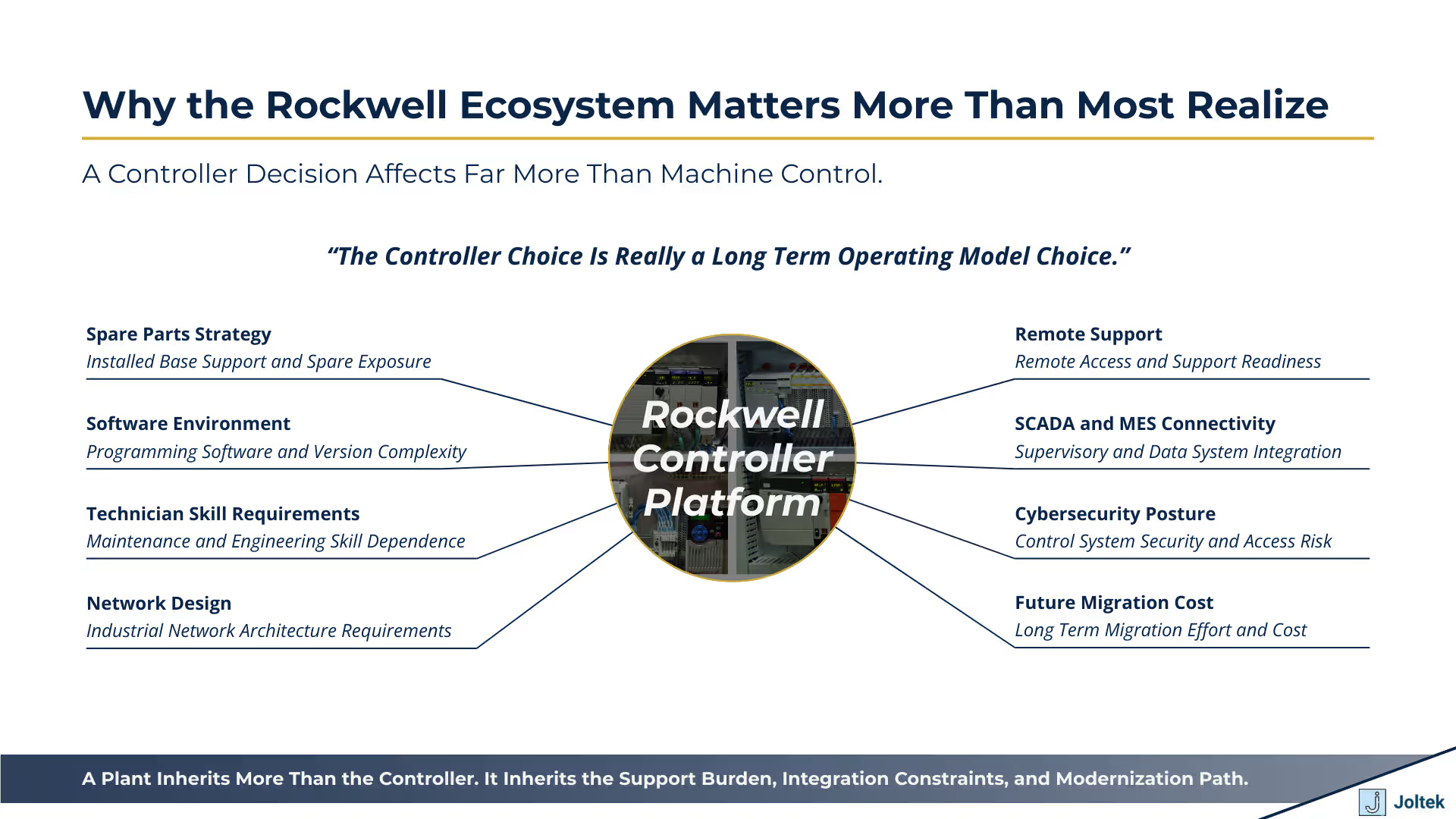

When people first talk about PLC selection, the conversation often stays too narrow. It becomes a discussion about machine control, I O count, processing capability, or what a programmer happens to know best. In the real world, especially inside a manufacturing facility with multiple lines, multiple vendors, and multiple generations of equipment, that is not how these decisions play out over time. A controller choice does not stop at the panel. It reaches into maintenance, engineering, IT, operations, procurement, and even leadership decision making. The controller you standardize on today shapes how expensive, supportable, and modern your plant will be tomorrow.

That matters because every platform carries a different support burden. It affects what spares you need to stock, how easy it is to source replacement parts, and whether a breakdown can be solved with parts sitting in your storeroom or whether someone is scrambling to find used hardware on the secondary market. It affects software licensing and access, which is one of the most overlooked issues in many facilities. A site may technically have the right controller installed, but if the required programming environment is locked to a few laptops, tied to outdated versions, or dependent on a shrinking number of people who know how to use it, then the site is not nearly as resilient as it appears on paper.

The controller decision also affects technician and engineer capability in a much more direct way than many teams realize. Some platforms are familiar to a broader pool of maintenance and controls people. Others require older software, older methods of troubleshooting, and a much deeper dependence on tribal knowledge. That becomes especially important when plants are already under pressure from retirements, turnover, and inconsistent training. A control system is not just a technical asset. It is also a capability requirement. If the people supporting it are scarce, overloaded, or dependent on memory instead of standards, then the risk profile of that system is far higher than leadership often assumes.

It also reaches into network design, remote support, SCADA connectivity, MES integration, cybersecurity posture, and migration cost. A controller that seems acceptable at the machine level may become a major bottleneck when the site tries to centralize data, segment networks, add secure remote access, or roll performance information up into plant wide systems. The farther a facility moves toward connected operations, the more obvious it becomes that controller choices are really architecture choices. That is one of the reasons we spend so much time thinking about them in the context of broader modernization strategy, not just panel design or programming preferences. For readers thinking about how to approach these decisions at a more strategic level, our post on Control System Modernization Strategy is a useful companion because it frames these technology choices within a larger business and operational roadmap.

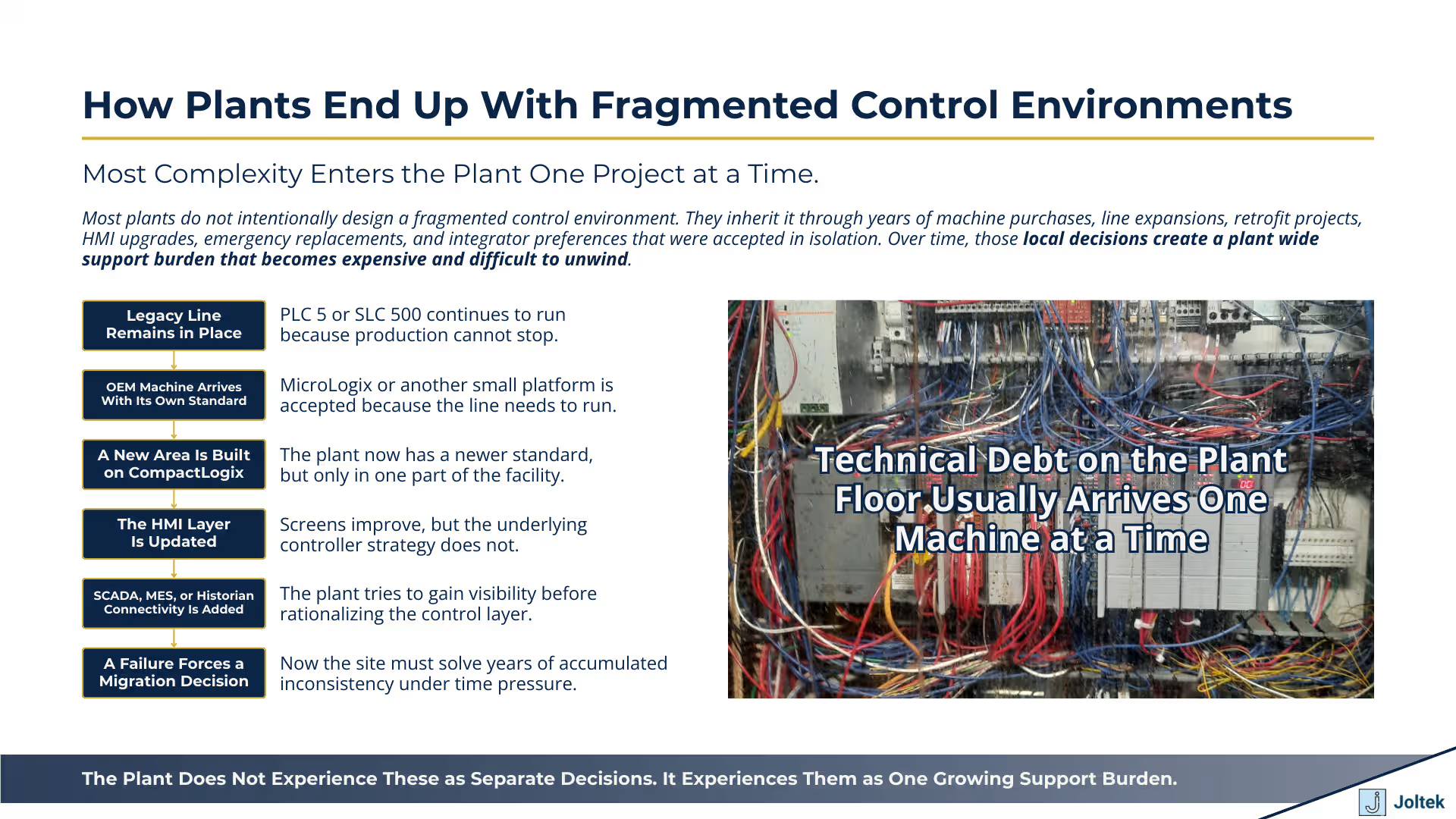

Most plants do not end up with a messy control environment because someone intentionally designed a bad standard. They end up there because decisions were made one project at a time, one machine at a time, and one emergency at a time. One OEM brings in a small machine with MicroLogix. Another builder prefers CompactLogix. A legacy production line still depends on SLC 500 or PLC 5. Someone updates the HMI layer to make the screens look better, but the controller underneath remains old and poorly documented. A new SCADA package gets deployed on top of a plant where the control layer was never truly rationalized. Every one of those decisions may look manageable in isolation. Together, they create a support model that becomes increasingly expensive and fragile.

This is where complexity quietly compounds. The site now needs multiple software environments, multiple communication approaches, multiple spare part strategies, and multiple assumptions about how equipment should be diagnosed and supported. Some controllers may be easy to connect into modern systems. Others require workarounds, protocol bridges, or special knowledge that only one or two people in the plant still carry. Maintenance teams inherit machines they did not specify. Engineering teams inherit architectures they would not choose today. Leadership inherits a capital planning problem that is harder to see because the pain is spread across downtime events, engineering hours, spare part inventories, outside contractor support, and delayed modernization projects.

Mixed generations of hardware also create a false sense of stability. A plant may look like it is running fine because production is still moving, but underneath that surface the site is accumulating technical debt. The older the environment becomes, the more likely it is that a future failure will trigger not just a repair, but an urgent and unplanned engineering project. At that point, the issue is no longer just one failed controller or one communication fault. The issue is that the plant waited too long to confront the fact that its control environment had become fragmented, inconsistent, and increasingly difficult to support as a system.

One of the biggest mistakes we see is that teams try to talk about SCADA, MES, historians, dashboards, and plant visibility as though those initiatives begin at the software layer. In reality, many of those efforts succeed or fail based on the condition of the control layer underneath. If the plant is running old controller families, outdated protocols, inconsistent naming conventions, fragmented network designs, and weak documentation, then the data layer will inherit all of those problems. The friction starts long before anyone builds a dashboard.

That is why this topic matters far beyond controls engineering. Plants are under more pressure than ever to improve uptime, make faster decisions, reduce downtime, strengthen cybersecurity, and make better use of production data. But data does not become useful just because it can be extracted. It becomes useful when the underlying systems are coherent enough to support reliable collection, interpretation, and action. If one part of the plant is built on modern Ethernet based architectures, another relies on legacy communication methods, and a third is only partially documented, then every effort above that layer becomes more difficult. SCADA projects take longer. MES integration becomes more expensive. Reporting becomes less trusted. Standardization stalls because the control systems themselves were never brought into alignment.

This is exactly why we believe modernization needs to start with a more disciplined assessment of the plant floor environment. Before teams ask what software to deploy or what dashboards to build, they should ask whether the controller ecosystem across the site is actually ready to support those ambitions. In many cases, the smartest modernization work begins by reducing variation, identifying obsolescence, clarifying network and protocol realities, and creating a plan that brings the control layer into a more supportable and scalable state. Once that foundation is stronger, the data and visibility initiatives above it become far more realistic and far more valuable.

If we start at the oldest end of the Rockwell landscape that still shows up in active production environments, PLC 5 deserves serious respect. It was a powerful control platform for its time, and more importantly, it was built for large and distributed industrial systems that had to run reliably for years. That is one of the reasons so many plants still have PLC 5 hardware in service today. In the era in which it was deployed, it gave manufacturers a robust architecture for broad machine and process control, and it fit naturally into environments built around Data Highway Plus, Remote I O, and legacy Rockwell software layers. Rockwell still documents DH+ as a factory floor network used for messaging and controller communications, which gives a sense of how foundational that architecture was for earlier generations of Allen Bradley systems.

The reason PLC 5 survived for so long is not hard to understand. These systems were often engineered conservatively, supported critical lines, and remained stable enough that many sites did not feel immediate pressure to replace them. In practice, if a plant had spare parts, people who knew the environment, and no urgent integration requirement, the controller could stay in service far longer than leadership originally expected. That historical durability is exactly why PLC 5 still appears in brownfield manufacturing. At the same time, Rockwell’s current replacement guidance and migration material make clear that the world around those systems has moved on, and that keeping them in place now creates an increasingly awkward relationship with current Logix architectures and modern support expectations.

That is where the real business issue starts. The cost of keeping PLC 5 alive is rarely visible in a single budget line. It shows up in secondary market purchases, limited hardware availability, a shrinking pool of technicians who are comfortable with the platform, and the engineering overhead required to bridge old communication methods into modern architectures. Even when a site can technically keep the controller running, it often cannot integrate it cleanly into contemporary SCADA, historian, cybersecurity, and remote support models without extra layers of complexity. In other words, the problem is not just that PLC 5 is old. The problem is that every year it stays in place, the plant becomes more dependent on exceptions, workarounds, and expertise that is harder to replace. A legacy controller can remain functional long after it stops being strategically supportable.

SLC 500 occupies a very important middle ground in the Rockwell story. It was more accessible than PLC 5 for many machine and line level applications, it became deeply familiar to generations of technicians and controls engineers, and it remained viable for a long period because it balanced capability, modularity, and relative simplicity. That is why so many manufacturing plants still depend on it. Even today, Rockwell continues to publish migration pathways from SLC environments into newer Logix based systems, which is a strong signal that the installed base remains meaningful and that customers are still working through these transitions.

One of the reasons SLC 500 lasted so long is that communication differences inside the family materially affected how useful a given controller was in a changing plant environment. Not every SLC was equally easy to integrate. The SLC 5/05 became especially important because Ethernet changed the equation. Once a site could connect a rack more directly into modern supervisory layers, that controller became a natural stepping stone for plants that wanted visibility or limited integration without immediately replacing the entire architecture. That migration logic was practical, and in many facilities it bought valuable time. Rockwell’s SLC migration references still reflect this reality by focusing on how existing hardware and wiring can transition toward CompactLogix based systems while preserving as much continuity as possible.

The danger is that partial modernization often becomes a permanent operating model. A plant upgrades just enough to connect an aging SLC rack into SCADA, maybe adds some visibility, maybe rationalizes one section of a line, and then stops. From a distance, it looks like progress. On the ground, the old rack is still there, the old logic assumptions are still there, the maintenance burden is still there, and the broader control strategy remains unresolved. This is one of the most common traps in brownfield manufacturing. Teams solve the connectivity problem before they solve the architecture problem. As a result, the plant gains temporary visibility but does not materially reduce technical debt. Over time, that creates a control environment that looks more modern from the HMI or SCADA layer than it actually is underneath.

Micro800 and MicroLogix are often grouped together by people who simply see both as smaller Rockwell controllers, but that framing hides an important distinction. Micro800 belongs to a different design mindset. Rockwell positions the Micro800 family within the Connected Components Workbench environment, and the official selection guide explicitly describes Connected Components Workbench as the programming and configuration environment for Micro800 controllers and related connected components products. That matters because it signals a smaller machine oriented ecosystem, a lower barrier to entry, and an approach aimed at cost effective standalone control rather than a direct continuation of the traditional Logix progression.

That makes Micro800 useful in the right context. For smaller standalone applications, simpler machines, and situations where cost sensitivity matters, Micro800 can be a very reasonable choice. It lowers the hardware and software barrier, and it can make sense for builders or end users who do not need the full architectural depth of the broader Logix family. But that does not mean it should be treated as a smaller version of CompactLogix or ControlLogix. It comes from a different software environment, a different ecosystem logic, and often a different support model. That distinction becomes especially important when a site is trying to standardize across multiple machine types. Standardization is not just about buying Rockwell. It is about deciding which Rockwell ecosystem the plant is actually committing to.

MicroLogix is different again. It sits much closer to the older RSLogix 500 world, and that alone shapes how it should be understood. MicroLogix can still be useful in legacy machines, and from a training perspective it remains valuable because it forces people to think more directly about memory, addressing, and lower level controller structure. For learning fundamentals, that is not a bad thing. In brownfield environments, it can also remain practical where the machine is stable, the support model is known, and there is no immediate business case to replace it. But for new standardization, MicroLogix is usually the wrong answer. It ties the site back into an older software and support paradigm when most plants would be better served by moving toward a more current and scalable architecture. The key point is simple: Micro800 and MicroLogix may both look small, but they belong to different eras, different toolchains, and different long term strategies.

CompactLogix is where many plants believe they have standardized, when in reality they have only standardized in name. This is one of the most important distinctions in the entire Rockwell ecosystem. The CompactLogix label covers multiple generations, different hardware assumptions, and different I O strategies. Rockwell’s current selection material distinguishes among CompactLogix 5370, 5380, 5480, and related Compact GuardLogix systems, while current product pages also indicate that older 1769 L3x controllers are discontinued and that migration toward the 5380 platform is recommended. That alone should tell teams that “we use CompactLogix” is not yet a sufficiently precise statement for plant standardization.

The practical difference between the older 1769 world and the newer 5069 world is not cosmetic. It influences controller capability, I O architecture, future replacement options, network design assumptions, and spare parts strategy. If one site carries older 1769 era hardware while another is buying into newer 5069 based platforms, the organization may think it has one standard when it actually has two support models. The field implications are significant. The spare parts cabinet changes. The migration path changes. The available controller families change. Even the logic around future machine purchases changes, because the plant now has to decide whether incoming OEM equipment should match the older installed base or the newer strategic direction.

This is where many machine level decisions are either strengthened or quietly undermined. CompactLogix can be an excellent fit for modern machine control, but only if the family, I O ecosystem, and deployment model are chosen intentionally. A site that aligns machine standards, network expectations, and controller generations can build a very supportable and scalable environment. A site that accepts any controller with the CompactLogix name on it may end up with fragmented standards that look unified only on paper. From a consulting perspective, this is often where the hidden cost of inconsistency enters the system. Not because CompactLogix is the wrong platform, but because the organization failed to define what it actually meant by CompactLogix standardization. In many plants, the real issue is not whether they chose CompactLogix. It is whether they chose one coherent version of CompactLogix.

ControlLogix remains the enterprise grade Rockwell platform for a reason. It is modular, flexible, and well suited to larger lines, batch systems, process applications, complex safety requirements, and architectures where multiple network, I O, motion, and control requirements have to live together in a coordinated way. Rockwell’s current literature continues to frame ControlLogix and the Logix 5000 controller family as a modular architecture with broad support for different communications and application needs, and that is exactly why it remains so common in larger manufacturing environments. When the process is complex, when modularity matters, or when future architectural flexibility is important, ControlLogix is still a very strong fit.

But owning a ControlLogix rack is not the same thing as having a sound ControlLogix architecture. That is where many teams underestimate the responsibility that comes with the platform. Because it is so modular, the quality of the end result depends heavily on the engineering decisions around it. Module selection matters. Network segmentation matters. Firmware planning matters. The generation of controller selected matters. There is a significant architectural difference between a plant that simply accumulated L5, L6, L7, L8, and now L9 era hardware over time and a plant that has intentionally rationalized its controller strategy around a supportable future state. Without that discipline, even a high capability platform can become fragmented and difficult to govern.

This becomes especially important in brownfield manufacturing, where ControlLogix often sits at the center of modernization initiatives. A site may be migrating out of older communication methods, replacing legacy controller families, integrating motion, updating safety, or connecting the line more tightly to SCADA and MES. In those situations, ControlLogix can absolutely be the right destination platform, but only if the plant treats architecture as a first class decision. That means planning generations intentionally, aligning firmware strategy, being disciplined about module and network design, and understanding how the rack fits into the broader plant standard. The value of ControlLogix is not just that it can do a lot. The value is that it can anchor a robust and expandable architecture when used with the level of rigor that the platform demands.

One of the most common mistakes we see in manufacturing is that controller decisions are made on the basis of familiarity rather than strategy. Someone in the plant knows a certain platform well. A systems integrator prefers a certain family because it fits their standard templates. A machine builder selects what they already use across their customer base. Procurement pushes toward what appears to be the lower cost option in the short term. None of those inputs are irrelevant, but none of them should be the starting point. If the goal is to make a sound decision for the facility, the first question should be much simpler and much more disciplined: where does this platform stand in its lifecycle, how available is it, how long can it realistically be supported, and what does the migration path look like when the time comes to move on.

That matters because many decisions that look reasonable at the project level become expensive at the plant level. A controller may work perfectly well today, but if it is mature, constrained in availability, increasingly dependent on used inventory, or already awkward to integrate into current architectures, then the plant is inheriting risk whether it acknowledges that risk or not. The problem is that this exposure is often invisible during procurement and painfully obvious during failure. What looked like a cost effective choice becomes a future emergency because the hardware is hard to source, the software environment is aging, and the people who know the platform are becoming harder to find. A sound controls decision begins with support horizon and lifecycle reality, not with habit.

This is why lifecycle status should be the first screen in any platform discussion. Before a site debates performance, cost, or programming preference, it should ask whether the controller family is active, whether associated modules are still current, whether spare parts are realistically obtainable, and whether the vendor has a clear and supportable path forward. That discipline changes the conversation. It forces teams to think in terms of operating life, replacement exposure, and long term architecture rather than short term convenience. It also gives leadership a much more honest picture of what the plant is really buying when it approves a machine or an upgrade.

Once lifecycle is understood, the next step is to widen the lens. In many plants, the controller becomes the headline item and everything around it gets treated as secondary. In practice, that is backwards. The processor is only one element of the architecture, and it is often not the element that creates the most friction over time. The real support burden of a control system usually sits in the surrounding ecosystem: local and remote I O, network modules, drives, HMI compatibility, SCADA connectivity, historian strategy, firmware stack, programming software, safety components, and the practical question of what the storeroom is now expected to carry. A platform decision that looks simple when reduced to the controller part number becomes far more consequential when assessed as a complete operating environment.

This is where a more strategic evaluation pays off. A team should be asking whether the controller fits the intended I O structure, whether the network approach is aligned with plant standards, whether the HMI and supervisory layers can interact with it cleanly, whether firmware management will be straightforward or painful, and whether the software environment fits the site’s actual support model. It should also ask whether the safety architecture is coherent, whether remote access can be handled appropriately, and whether the resulting design increases or reduces the number of hardware families and software tools the site must support. Those are not side questions. They are the difference between a plant that appears standardized and a plant that is actually supportable.

In well run facilities, architecture decisions are evaluated in terms of operational consequences, not just technical specifications. The point is not simply to ask whether the controller can run the machine. The point is to ask whether the entire architecture can be maintained, diagnosed, integrated, secured, and eventually migrated without creating unnecessary complexity. This is especially important in brownfield environments, where new equipment is rarely entering a blank slate. It is entering an existing support model with inherited constraints, existing software habits, established spare philosophies, and a wider plant data architecture that may already be under strain.

A surprisingly large amount of long term plant complexity enters through new equipment. A machine builder delivers a system that works, the line needs to run, the team is under schedule pressure, and the plant accepts whatever was specified because challenging it feels like it will delay startup. The immediate project moves forward, but the site quietly absorbs a new exception. Over time, enough of these exceptions accumulate and the plant no longer has a standard. It has a collection of tolerated differences.

This is one of the most expensive forms of technical drift because it rarely appears dramatic in the moment. One machine with a different controller family may not look like a serious issue. One separate software package may not appear burdensome. One different network approach may seem manageable. But maintenance does not experience those as isolated facts. Maintenance experiences them as another platform to learn, another failure mode to troubleshoot, another set of spares to carry, another set of assumptions about how data is structured, and another architecture that does not quite fit the rest of the site. What begins as convenience at procurement becomes complexity in operations.

That is why every incoming machine should be evaluated against a real plant standard. The questions should be explicit. Does it use an approved controller family. Does it align to the approved software environment. Does it follow the approved network approach. Does it fit the plant’s spare philosophy. Does it support the data integration method the site intends to use. If the answer to those questions is no, then leadership should treat that variance as a strategic decision, not an incidental one. A plant standard only has value if it is defended at the point where new complexity is trying to enter the system.

This is also where strong engineering governance distinguishes high maturity sites from reactive ones. Mature sites do not simply accept what builders prefer. They define what the facility can support and require incoming equipment to align accordingly. That does not mean every machine must be identical. It means the plant has a clear view of which differences are acceptable and which ones create downstream cost that far outweighs the convenience of letting the exception through.

Many manufacturing teams talk about visibility as though it begins with software. The conversation starts with dashboards, reporting, historians, MES, analytics, or plant wide data infrastructure. Those initiatives matter, but they often stall for a much more fundamental reason: the control layer underneath them was never designed, standardized, or cleaned up with those use cases in mind. The result is a familiar pattern. The business wants insight, the software team wants connectivity, and the plant floor environment responds with inconsistent controller families, inconsistent tag structures, legacy protocols, fragmented network paths, and equipment that was only ever designed to run locally rather than share reliable and structured data upward.

This is why the readiness of the control layer should be evaluated before ambitious data goals are set. A plant that has poor controller standardization, weak naming conventions, inconsistent architecture between lines, and large pockets of legacy communication will struggle to build trusted visibility no matter how strong the dashboarding tools are. Data can be extracted, but that does not mean it is coherent, contextualized, or easy to govern. In many facilities, what appears to be a reporting problem is actually a control architecture problem wearing a reporting mask.

From a practical standpoint, teams should ask whether the installed controller base supports modern connectivity expectations, whether data structures are reasonably consistent across equipment, whether network design allows clean integration into supervisory and historian layers, and whether the architecture is maintainable once those systems are in place. If the answer is no, then the right move is usually not to push harder at the dashboard layer. The right move is to first rationalize the control environment so that the data layer has something stable and supportable to sit on top of. That is where a lot of industrial modernization efforts either gain traction or lose momentum.

This is also where the broader Joltek perspective becomes relevant. We do not see data, SCADA, or MES as isolated software initiatives. We see them as outcomes that depend on the condition of the plant floor foundation. For readers who want to go deeper on that connection, our post on Unlocking Industrial Data in Manufacturing expands on the reality that useful plant data is created by sound architecture, not by visualization tools alone.

One of the most expensive misunderstandings in industrial automation is the belief that a migration begins and ends with replacing a controller. On paper, it can look that simple. A site has a PLC 5, an SLC 500, an older CompactLogix, or an aging ControlLogix processor, and the instinct is to treat the project as a hardware refresh. In reality, Rockwell’s own migration and conversion materials make clear that moving legacy logic into Logix based platforms is a structured engineering process that includes file preparation, conversion steps, controller and chassis configuration, I O mapping, and message configuration. In other words, the vendor documentation itself reinforces what experienced practitioners already know: this is not a part replacement exercise. It is a system redesign exercise with operational consequences.

That distinction matters because the controller sits at the center of a much larger set of dependencies. Once the project starts, teams quickly run into software conversion issues, firmware coordination, communications redesign, I O decisions, tag structure changes, HMI updates, SCADA impacts, testing requirements, and commissioning risks. Even if the logic can be converted, it still has to behave correctly inside a different architectural environment. Data types may need to be reviewed. Messaging may need to be rebuilt. Address based assumptions may need to be translated into a tag based model. Existing screens may still point to old structures. Supervisory systems may still expect legacy data organization. Every one of those items creates work, and every one of them affects project risk.

This is why strong migration teams treat the effort as a full lifecycle engineering program rather than a controller procurement task. The work has to begin with understanding the existing environment in enough detail to know what is truly being replaced, what can be carried forward, what should be redesigned, and what cannot safely be assumed. The controller may be the most visible part of the migration, but it is almost never the hardest part of the migration.

In many brownfield environments, the logic is not what forces the project. The network is. Older systems often continue to run adequately until a single communications dependency becomes impossible to support, impossible to replace quickly, or impossible to integrate into a broader plant architecture. That is why legacy networks so often become the real trigger for modernization. Data Highway Plus, DeviceNet, ControlNet, serial infrastructure, and the bridge devices that tie them into newer layers may all function for years with very little executive attention. Then one component fails, one scanner card becomes unavailable, one interface module creates instability, or one integration initiative reveals that the architecture has become too brittle to extend further.

Rockwell’s own current ControlLogix documentation still references support for a wide range of communication options, including EtherNet/IP, ControlNet, DeviceNet, Data Highway Plus, and Remote I O, which is a reminder of how many generations of plant networking still coexist inside active facilities. That coexistence is exactly what creates difficulty during modernization. The plant is not simply moving from one controller to another. It is often trying to unwind years of accumulated network decisions, bridge layers, and protocol compromises that were made for good reasons at the time but no longer fit a modern operating model.

In practice, this is where projects get more complicated than expected. A line may still run well enough on its legacy control hardware, but the network path around it has become fragile. DeviceNet can be stable for years until one node, one scanner, or one media issue turns troubleshooting into a prolonged outage. ControlNet may continue doing its job until the plant realizes that expansion, support, and integration are now awkward and increasingly specialist dependent. Data Highway Plus may remain functional in an older area until a broader connectivity initiative makes it obvious that too much of the site is still relying on a communication layer that was never meant to support modern visibility and cybersecurity expectations. We have seen this repeatedly in the field: what looks like a controller problem at the start often reveals itself to be a network architecture problem by the time the work is scoped correctly.

That is also why urgent projects tend to feel so expensive. The plant did not just lose a component. It lost the assumption that the surrounding architecture could continue unchanged. Once that assumption breaks, engineering has to make decisions quickly about protocol conversion, remote I O handling, field device connectivity, network segmentation, and how much of the old environment should be preserved versus redesigned. This is one of the reasons migration projects need more front end architecture work than many teams initially expect.

Modernization projects rarely fail because the team forgot that the hardware was old. They fail because the project underestimates how much bad structure has accumulated around the hardware over time. One of the most common traps is migrating logic without cleaning up years of poor tag naming, inconsistent descriptions, and weak documentation. The logic may technically run after conversion, but the plant inherits a newer platform wrapped around an older information model. That does not create a modern system. It creates a harder to troubleshoot version of the old one.

Another frequent trap is keeping legacy architecture assumptions inside a new platform. Teams move to a newer controller family, but preserve old communication workarounds, poor modular boundaries, or confusing I O organization because changing them feels too disruptive during the project. The result is a system that looks current by part number but behaves like a legacy design in all the ways that matter for support and scalability. Firmware compatibility is another area where projects get hurt. Teams often focus on getting the main controller upgraded while underestimating the interaction between controller revisions, module firmware, HMI software, supervisory platforms, and related devices. Those mismatches do not always appear during planning. They tend to appear under schedule pressure, closer to startup, when the cost of rework is much higher.

Remote I O conversion is also frequently treated as a secondary task when it should be central to the migration strategy. If the controller is modernized but the I O layer remains awkward, loosely documented, or dependent on transitional compromises, then much of the support risk remains in place. The same is true for testing and startup. Plants often budget for hardware and software effort, but not enough for FAT, SAT, cutover planning, fallback strategy, and the support required immediately after startup. There is also a persistent tendency to assume that moving to Ethernet resolves complexity by itself. It does not. Ethernet can absolutely improve flexibility and integration potential, but it can also expose poor segmentation, inconsistent standards, and weak data modeling if the architecture around it is not disciplined. The medium changed. The need for sound engineering did not.

A strong migration plan should create clarity before the plant enters the most expensive phase of execution. At minimum, it should include the following elements:

What makes this list valuable is not the checklist itself. It is the discipline behind it. A plant that has these items defined is much less likely to discover major architectural issues in the middle of a shutdown window. It is much less likely to underestimate the interaction between controller migration and downstream supervisory systems. It is much less likely to confuse a hardware refresh with a genuine modernization effort. That last point matters more than most teams realize, especially when visibility and data use cases are part of the business case. For readers who want to think more deeply about how controller behavior, data collection, and supervisory systems interact, our post on PLC Scan Cycles and Polling is a relevant extension of this discussion.

At a higher level, a good migration plan should reduce surprises, not just define tasks. It should make clear what the plant is standardizing toward, what it is retiring, what technical debt is being removed, and what risk remains after startup. That is ultimately the real measure of a successful modernization. Not whether the new controller powers up, but whether the plant ends up with a control environment that is materially easier to support, easier to integrate, and better aligned with the next decade of operational needs.

At the end of the day, understanding the Rockwell ecosystem is not about memorizing controller families, software names, or part number differences for their own sake. It is about making better plant decisions. That is the point that matters most. When a site understands where PLC 5, SLC 500, MicroLogix, Micro800, CompactLogix, and ControlLogix actually fit, it becomes much easier to decide what should be retained, what should be standardized, what should be migrated, and what should never be specified again. The value of understanding the Rockwell ecosystem is that it improves decision quality across operations, engineering, maintenance, and modernization.

That decision quality has very real consequences. The right controller strategy reduces downtime risk because the plant is less dependent on obsolete hardware, fragile network layers, and a shrinking pool of specialist knowledge. It simplifies support because teams are not trying to maintain an uncontrolled mix of platforms, software environments, and spare part philosophies. It improves data access because supervisory systems, historians, and MES layers have a cleaner and more consistent control foundation to build on. And it creates a much better path for modernization because the facility is no longer trying to layer new expectations on top of architectures that were never designed to support them.

The wrong strategy creates the opposite result. It leaves the site with fragmented standards, uneven supportability, and technical debt that gets more expensive every year it remains in place. It creates hidden risk in spare parts, in software access, in network infrastructure, and in the basic question of who can actually support the system when something fails. It also creates expensive surprises. What looks manageable in normal operation can become a major problem during an outage, a capital project, a new machine integration, or a plant wide push for better visibility and data use.

That is why Rockwell hardware should never be evaluated in isolation. A controller is not just a controller. It sits inside a broader operating context that includes lifecycle status, plant standards, network design, software environment, support model, spare parts strategy, and the business goals the facility is trying to achieve. When those elements are considered together, better decisions follow. When they are ignored, even good hardware choices can turn into bad plant outcomes.